Short answer: You should not paste raw client or personal data into ChatGPT. But many small businesses already rely on ChatGPT for real work. The real question isn't "should I ever use it?"—it's: "What can actually go wrong, and how do people handle this responsibly?"

This guide explains real incidents that have already happened, why large companies prohibit this internally, and what a realistic, defensible workflow looks like for small teams.

When you paste text into ChatGPT, the data leaves your device, is processed on external servers, and may be stored or retained. You lose direct control over retention, access, and future use. Even if the AI provider does everything right, risk doesn't disappear—it just moves outside your control.

What Happens When You Paste Client Data Into ChatGPT

When you paste text into a public AI tool like ChatGPT:

- The data leaves your device

- It is processed on external servers

- It may be stored, logged, or retained depending on service settings

- You lose direct control over retention, access, and future use

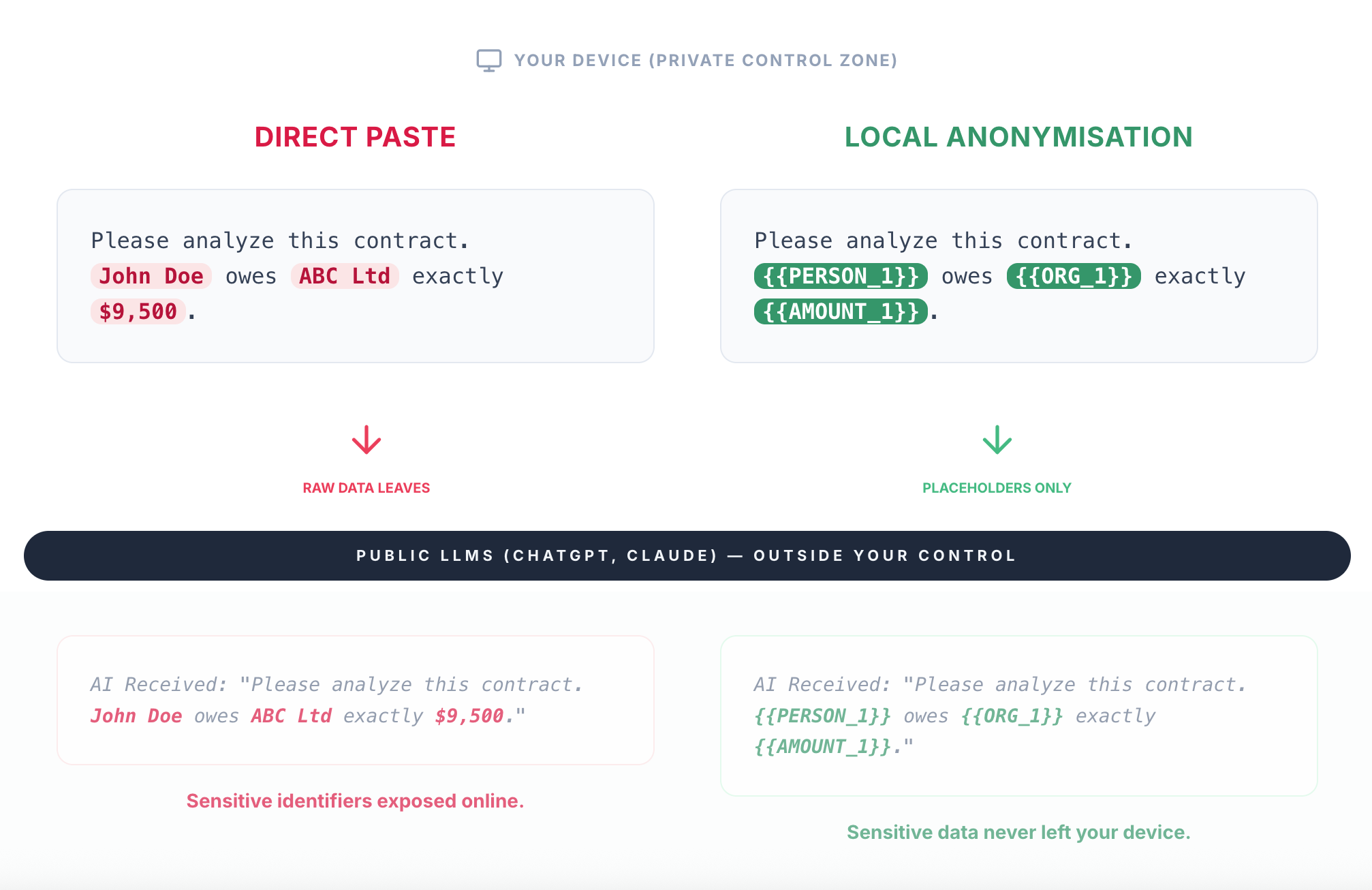

Directly pasting text into public LLMs sends raw data outside your control. Anonymising locally means sensitive values are replaced with placeholders before anything leaves your device.

What Counts as Client or Sensitive Data?

Many people underestimate this. Client or sensitive data includes:

- Names and contact details

- Email conversations

- Contracts and agreements

- Invoices and pricing

- Internal notes about clients

- HR or employee information

- Any document tied to a real person or company

If the data isn't public, assume it's sensitive.

Real Incidents: This Has Already Happened

These risks are not hypothetical.

1. Samsung engineers leaked confidential data via ChatGPT

In early 2023, engineers at Samsung reportedly pasted internal source code and other proprietary material into ChatGPT while troubleshooting. The data was unintentionally shared with an external AI service, and Samsung responded by restricting the use of public AI tools internally.

This wasn't a breach. It was normal employees doing normal work—and that's exactly why it matters.

2. Stolen ChatGPT credentials exposed full chat histories

In 2023, security researchers reported that malware ("info-stealers") on infected employee devices had harvested ChatGPT login credentials. With stolen credentials, an attacker can log in and read an account's entire saved chat history, sometimes spanning months.

If raw client data had been pasted over time, all of it became visible.

Even if the AI provider is secure, your account might not be. Chat history becomes a long-term liability.

3. Sensitive data may be retained or used for training

Many public AI services improve their models using user interactions unless users explicitly opt out or are on specific plans. This creates real uncertainty:

- Data may be retained longer than expected

- Historical prompts may persist

- Parts of your input may influence future model behaviour

For businesses, this raises a hard question: "Can we prove our client data was not reused beyond its original purpose?" If the answer is unclear, risk exists.

Why Big Companies Explicitly Ban This

Most large organisations now have AI usage policies. These almost always say:

- ❌ Do not input personal data

- ❌ Do not input client or confidential information

- ❌ Do not input proprietary documents

This isn't paranoia—it's risk management. Here are the real reasons behind those rules:

Reason 1: Loss of control over the data lifecycle

Once data enters a public AI tool, you cannot independently audit how long it is retained, prove that it was deleted, or control who can access it. From a risk perspective, that alone is enough to say no.

Reason 2: Training and reuse uncertainty

Even with opt-out settings, employees may not configure them correctly, settings may differ between personal and company accounts, and prompts sent before the opt-out may still exist. Companies therefore assume: "If we can't prove it wasn't reused, we treat it as exposed."

Reason 3: Account compromise multiplies the impact

As the info-stealer cases show, when an AI account is compromised, attackers can read the full saved history, including old prompts everyone had forgotten about. The more sensitive data has been pasted over time, the larger the exposure. This is why companies aim to prevent sensitive data from entering the system at all.

Reason 4: Legal, contractual, and insurance exposure

After an incident, companies are asked: Did you follow internal policy? Did you apply reasonable safeguards? Was the risk preventable? "Everyone does it" is not an acceptable answer.

Why "Just Don't Paste Sensitive Data" Fails in Practice

Small teams already know this rule doesn't work. It fails because:

- ChatGPT is genuinely useful

- Manual redaction is slow and error-prone

- People miss small details under pressure

- Shadow AI usage happens anyway

Blanket bans reduce visibility—not usage.

A Practical, Defensible Workflow

Instead of asking "Can I paste this?", many teams ask: "Can I remove identifying details before using AI?"

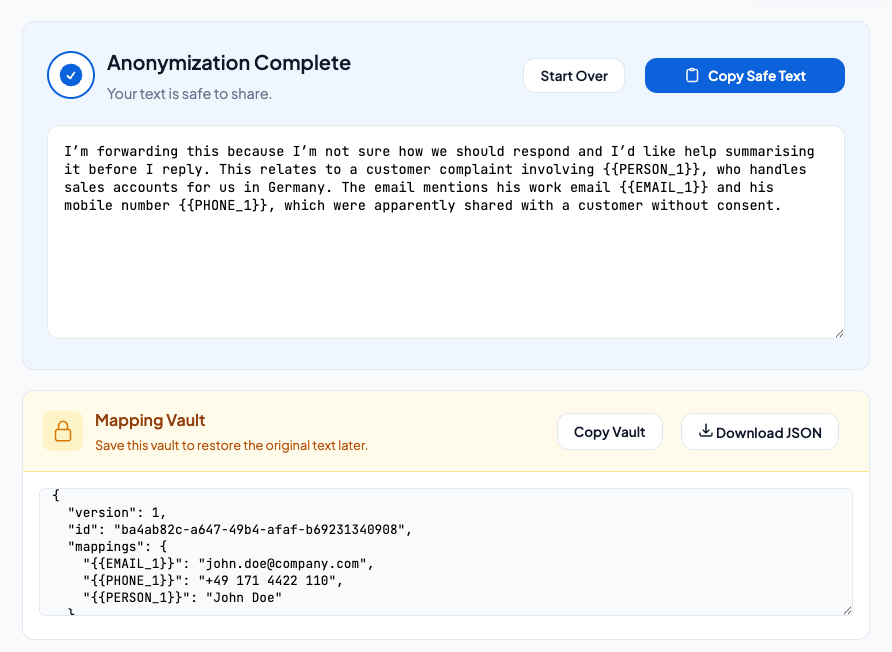

A realistic workflow looks like this:

- Detect sensitive information locally

- Replace it with placeholders (e.g. [PERSON_1], [ORG_1], [EMAIL_1])

- Send only anonymised text to ChatGPT

- Keep the original data on your device

- Revert the placeholders back to the original data locally

This way, the sensitive details you detected never leave your device, productivity is preserved, and the workflow aligns with how large companies manage AI risk.

Example: Sensitive details are replaced locally before the text is copied into ChatGPT. The original information never leaves your device.
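To make the steps above concrete, here is a minimal Python sketch of the detect, replace, and revert loop. It is an illustration under stated assumptions, not how PromptSafe or any other tool is implemented: the function names `anonymise` and `restore`, the `PATTERNS` table, and the `known_names` parameter are all hypothetical, and the two regular expressions only catch simple emails and phone numbers. Real detection of names, organisations, and addresses usually needs a named-entity model on top.

```python
import re

# Hypothetical, deliberately simple patterns for illustration only.
PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def anonymise(text, known_names=()):
    """Replace detected values with placeholders.

    Returns the anonymised text and a placeholder -> original mapping.
    The mapping stays on your device; only the text is sent to the AI tool.
    """
    mapping = {}   # placeholder -> original value
    seen = {}      # (label, original value) -> placeholder
    counters = {}  # label -> number of placeholders issued so far

    def placeholder_for(label, value):
        # Reuse the same placeholder for repeated mentions of the same value.
        key = (label, value)
        if key not in seen:
            counters[label] = counters.get(label, 0) + 1
            token = f"[{label}_{counters[label]}]"
            seen[key] = token
            mapping[token] = value
        return seen[key]

    # Step 1 and 2: detect pattern-based identifiers and swap in placeholders.
    for label, pattern in PATTERNS.items():
        text = pattern.sub(
            lambda m, label=label: placeholder_for(label, m.group(0)), text
        )

    # Names cannot be found by regex; here the caller supplies them (assumption).
    for name in known_names:
        text = re.sub(
            re.escape(name), lambda m: placeholder_for("PERSON", name), text
        )

    return text, mapping


def restore(text, mapping):
    """Step 5: put the original values back into the AI tool's response."""
    for token, value in mapping.items():
        text = text.replace(token, value)
    return text


original = (
    "Call Jane Doe on +44 20 7946 0958 or email jane.doe@acme.example. "
    "Jane Doe signed on Monday."
)
safe_text, mapping = anonymise(original, known_names=["Jane Doe"])
print(safe_text)                     # this is what you would paste into ChatGPT
print(restore(safe_text, mapping))   # run locally on the response
```

Because the same value always maps to the same placeholder, the model can still follow who did what, and the response can be reverted locally without the mapping ever leaving your machine.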

Why Manual Redaction Isn't Enough

People often miss:

- Repeated references throughout a document

- Tables and footnotes

- Contextual identifiers (job titles that reveal people)

- Embedded personal data in file metadata

Automation helps reduce human error—especially when working quickly.
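One way automation can catch these misses is a final check before anything is sent: search the outgoing text for any original value, or part of one, that survived redaction. The sketch below is a hypothetical helper (`find_residual_identifiers` is an invented name, not part of any tool mentioned here) and assumes you already hold a list of the original sensitive values. It ignores case and irregular spacing, which is how names often slip through in tables and footnotes. It does not inspect file metadata or infer identity from job titles.

```python
import re


def find_residual_identifiers(outgoing_text, originals):
    """Return original values, or word parts of them, still present in the text."""
    # Normalise whitespace and case so "JANE  DOE" matches "Jane Doe".
    normalised = re.sub(r"\s+", " ", outgoing_text).casefold()
    leaks = []
    for value in originals:
        # Check the full value and each word of 3+ characters (e.g. a surname).
        candidates = {value} | {part for part in value.split() if len(part) >= 3}
        for candidate in sorted(candidates):
            needle = re.escape(re.sub(r"\s+", " ", candidate).casefold())
            # Lookarounds instead of \b so values starting with "+" still match.
            if re.search(rf"(?<!\w){needle}(?!\w)", normalised):
                leaks.append(candidate)
    return leaks


outgoing = (
    "Call [PERSON_1] on [PHONE_1]. Ms Doe's manager approved; "
    "table row: JANE  DOE, Director."
)
leaks = find_residual_identifiers(outgoing, ["Jane Doe", "+44 20 7946 0958"])
if leaks:
    print("Do not send yet, still present:", leaks)
```

An empty result does not prove the text is anonymous; it only shows that the values you listed are gone. Treat it as a safety net on top of detection, not a replacement for it.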

When Is ChatGPT Generally Safe to Use?

ChatGPT is usually fine for:

- Public information

- Generic templates

- Fully anonymised text

- Hypothetical examples

- Brainstorming and ideation

Risk starts when real, identifiable data enters the prompt. Keep it anonymous and you're on solid ground.

The Bottom Line

- ❌ Real companies have already leaked data via ChatGPT

- ❌ Chat history can become a liability if accounts are compromised

- ❌ Model training and retention create long-term uncertainty

- ✅ Anonymising data before AI use is a practical, defensible compromise

You don't need enterprise AI plans to be responsible—but you do need better data hygiene.

Try PromptSafe

Anonymise sensitive data locally before using ChatGPT or Claude. Your documents never leave your device.

Try PromptSafe Free →

The safest AI data is not the data that's "securely stored."

It's the data that never enters the system at all.